Artificial intelligence is often described as a technological revolution.

In reality, it may represent something far deeper: a transformation in how societies produce, organize, preserve, distribute, and ultimately govern knowledge itself.

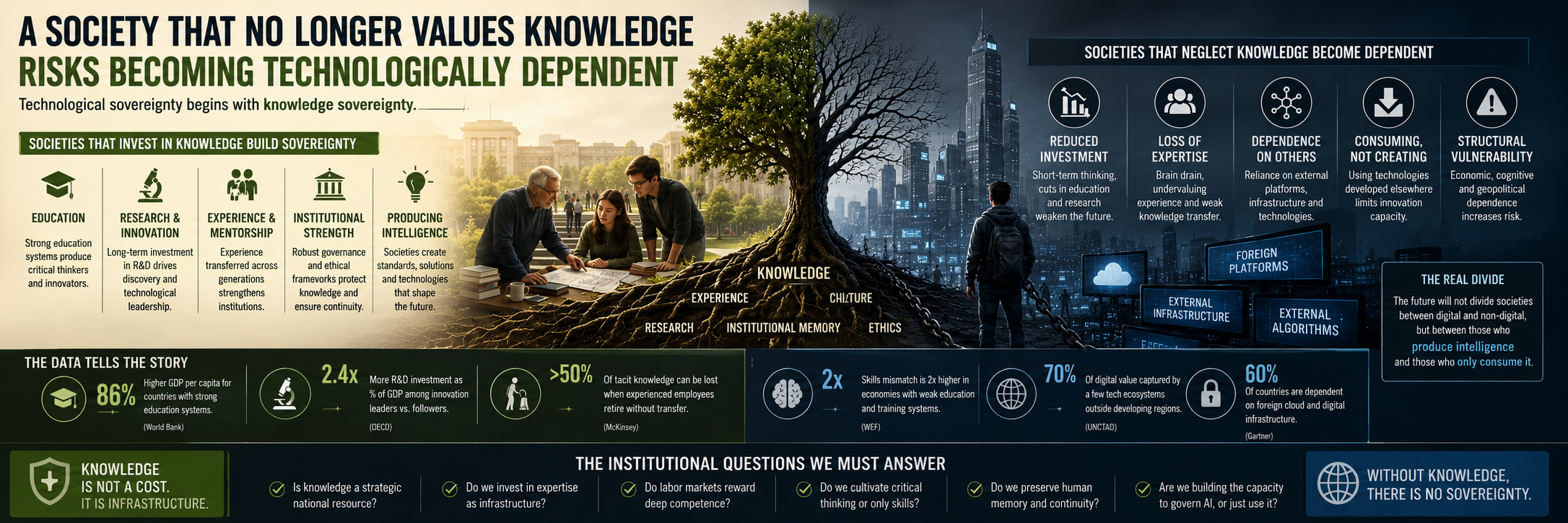

This distinction matters because technology alone rarely changes civilizations. What changes civilizations are the structures through which knowledge is accumulated, transmitted, institutionalized, and converted into power.

For decades, many advanced economies progressively stopped treating expertise as strategic infrastructure. Specialized competencies came to be seen as expensive rather than essential. Long-term research was often perceived as slower and less attractive than immediate operational efficiency. Educational systems were increasingly evaluated through short-term economic metrics rather than through their ability to cultivate critical reasoning, scientific depth, historical awareness, or institutional continuity.

At the same time, labor markets increasingly rewarded adaptability over mastery.

Organizations learned to optimize for flexibility:

- lower labor costs,
- faster turnover,
- simplified organizational structures,
- outsourcing of competencies,
- dependence on external platforms,
- reduction of internal research capabilities,
- and gradual erosion of long-term expertise.

Initially, this model appeared rational and economically efficient.

Highly specialized professionals were often seen as rigid costs rather than strategic assets. Experience became difficult to quantify in quarterly reports. Institutional memory became secondary to immediate execution.

But artificial intelligence changes the equation entirely.

AI systems do not reduce the importance of knowledge. They radically amplify the strategic difference between societies capable of generating knowledge and societies limited to consuming technologies developed elsewhere.

This emerging divide may become one of the defining geopolitical fractures of the twenty-first century.

Countries capable of building advanced competencies in the following areas are not merely adopting tools:

- AI research,
- data governance,
- cybersecurity,
- scientific infrastructure,
- computational architectures,
- advanced education,
- cognitive industries,
- and institutional knowledge systems.

They are shaping the future operating systems of economies, governments, organizations, and social coordination itself.

Others risk becoming dependent ecosystems.

And this dependency is not limited to software or hardware.

It becomes cognitive.

A society that gradually loses the ability to cultivate deep expertise eventually loses its capacity to interpret complexity independently. It becomes structurally dependent on external infrastructures, external algorithms, external data ecosystems, external cloud providers, and eventually external models of reasoning and decision-making.

Over time, even strategic autonomy becomes fragile.

The danger is subtle because generative AI creates the illusion that knowledge has become universally accessible. Information appears instantaneous. Answers appear abundant. Complexity appears compressed into conversational interfaces.

But access to outputs is not equivalent to possessing understanding.

This may become one of the great misunderstandings of the AI era.

AI can synthesize information, generate text, write code, produce recommendations, and automate analytical processes. Yet none of this eliminates the need for human systems capable of validating, contextualizing, governing, interpreting, and strategically applying knowledge over time.

Artificial intelligence can accelerate cognition.

It cannot independently produce wisdom, institutional continuity, ethical proportionality, or historical judgment.

These dimensions emerge from education, culture, scientific ecosystems, social memory, and accumulated human experience across generations.

This is why the role of universities, research centers, public institutions, scientific communities, and experienced professionals becomes even more central in the AI transition – not less.

Paradoxically, many societies are entering the AI age while simultaneously weakening the very infrastructures required to govern it responsibly.

In many sectors, tacit knowledge accumulated across decades is quietly disappearing.

Experienced professionals retire without structured knowledge transfer. Young workers increasingly navigate fragmented and unstable labor markets. Long-term mentorship declines. Institutions prioritize operational continuity over knowledge continuity. Expertise becomes diluted into temporary workflows, outsourced functions, and highly accelerated organizational cycles.

At the same time, AI systems are progressively integrated into domains requiring more contextual understanding rather than less:

- healthcare,
- governance,
- education,
- finance,
- justice,
- defense,
- urban systems,
- infrastructure management,
- and public administration.

This creates a profound paradox.

The more societies automate, the more they require uniquely human depth:

- historical memory,
- interdisciplinary reasoning,
- ethical interpretation,
- long-term thinking,
- institutional awareness,
- and the capacity to understand second-order consequences beyond immediate optimization.

The AI transition therefore risks exposing a dangerous contradiction within many contemporary economies.

For years, societies optimized for speed.

But resilience often depends on continuity.

They optimized for flexibility.

But strategic autonomy depends on accumulated competence.

They optimized for immediate productivity.

But civilizational stability depends on preserving knowledge across generations.

This tension becomes particularly visible when considering older generations.

In many technological narratives, older professionals are implicitly framed as less adaptive or less digitally aligned. Yet they frequently carry forms of knowledge that remain extremely difficult to formalize into datasets or algorithmic systems.

They preserve organizational memory.

They remember systemic failures.

They understand long historical cycles, institutional evolution, cultural transformations, and the unintended consequences of political and technological decisions.

Artificial intelligence can process archives.

But lived experience provides proportionality and judgment.

A society that marginalizes experienced individuals risks losing invisible layers of cognitive infrastructure that no AI model can fully reconstruct.

The disappearance of experienced professionals therefore represents more than demographic change.

It can become a form of institutional amnesia.

And institutional amnesia is particularly dangerous in periods of technological acceleration.

This is why the future may not simply divide societies between “digital” and “non-digital.”

The deeper divide may emerge between:

- societies capable of producing intelligence, and societies limited to consuming it;
- societies capable of governing AI, and societies forced to adapt to systems designed elsewhere;
- societies preserving knowledge sovereignty, and societies progressively dependent on external cognitive infrastructures.

The implications are not only economic.

They are geopolitical, cultural, educational, and institutional.

Technological dependency eventually influences:

- political autonomy,
- labor structures,
- educational priorities,
- industrial capacity,
- media ecosystems,
- and even the way societies define truth, expertise, and legitimacy.

For this reason, the AI discussion should never be reduced to tools alone.

The real challenge is institutional architecture.

- Do societies still consider knowledge a strategic national resource?

- Do organizations invest in expertise as infrastructure rather than short-term cost?

- Do labor markets reward deep competence, or only permanent adaptability?

- Do educational systems cultivate critical thinking and intellectual depth, or merely operational functionality?

- Do institutions preserve human memory, or continuously reset themselves through accelerated short-term cycles?

- Do governments understand that AI leadership is inseparable from educational, scientific, and cultural investment?

Artificial intelligence is forcing these questions back to the center of public life.

And perhaps the most important lesson of the AI era is this: Technological sovereignty begins with knowledge sovereignty.

A society that no longer values knowledge may initially appear efficient. But over time, it risks becoming dependent on those who still do.